Article Summary

AI search optimization is the practice of making a brand visible, accurately represented, and recommended across AI search platforms such as ChatGPT, Perplexity, Gemini, Google AI Overviews, and Claude. Optimist’s Complete Organic Revenue Engine (CORE) Framework is an integrated SEO + AEO methodology that maps optimization to four buyer journey stages and executes across eight implementation areas: Entity & Brand Consistency, Narrative Clarity, Content Coverage, Content & Cluster Architecture, On-Page Foundations, Content Structure & Extractability, Evidence & Citation Signals, and Schema & Structured Data.

I get this question every week from prospects: “How do we get ChatGPT to mention us?”

AI search optimization is the practice of making a brand visible, accurately represented, and recommended across AI search platforms like ChatGPT, Perplexity, Gemini, Google AI Overviews, and Claude. The companies doing it well are driving measurable pipeline from AI-sourced traffic. The companies ignoring it are losing deals before prospects ever visit their website.

Here is the core problem with most AI search optimization advice: it treats the discipline as a collection of tactical tricks. Add FAQ schema. Restructure your headers. Sprinkle in some statistics.

Those tactics matter, but without a strategic framework tying them together, they produce scattered results.

The companies seeing real pipeline from AI search treat it as a unified discipline, integrated with SEO, and mapped to the buyer journey.

Why AI Search Optimization Is a Pipeline Problem, Not a Visibility Problem

AI search optimization matters because B2B buyers now build vendor shortlists inside AI conversations before they ever visit a website. The companies that show up in those conversations generate pipeline. The companies that don’t get skipped entirely.

Here is how fast this shift happened. According to Forrester’s Buyers’ Journey Survey (2024), 89% of B2B buyers had already adopted generative AI as one of their top sources of self-guided information across every phase of the buying process. According to G2, 50% of B2B software buyers now start their vendor research in an AI chatbot. Two years ago, neither of those numbers existed. This is not a trend to watch. It is current buyer behavior.

The traffic these AI platforms send is also dramatically more valuable. Research from Semrush (June 2025) found that the average LLM visitor converts at 4.4x the rate of the average organic search visitor. The volume is smaller, but think about what is happening on the other side of that click: a buyer has described their problem to ChatGPT, received a recommendation, and clicked through already knowing who you are and what you do.

That is a completely different visitor than someone scanning page-one blue links.

But strong SEO performance does not automatically produce AI visibility.

Only 12% of ChatGPT-cited URLs rank in Google’s top 10 (Gracker.ai / Profound research). A company ranking #1 in Google for a target keyword may not get mentioned once when a buyer asks the same question in ChatGPT. The two channels are correlated but not equivalent.

Optimizing for AI Search: A Comprehensive Approach

Optimist’s Complete Organic Revenue Engine (CORE) Framework integrates SEO and AEO objectives at every stage of the buyer journey. It maps what needs to happen across 4 stages and executes through 8 implementation areas.

CORE Framework: 8 Implementation Areas

Execution spans 8 implementation areas:

- Entity & Brand Consistency — Entity disambiguation, consistent positioning, cross-property alignment, E-E-A-T signals

- Narrative Clarity — Problem-solution throughlines, scenario specificity, information gain

- Content Coverage — Topic universe coverage, commercial/comparison content, fan-out query targeting

- Content & Cluster Architecture — Internal linking, hub-and-spoke structure, funnel-stage content mapping

- On-Page Foundations — Title/meta, heading hierarchy, content depth, technical accessibility

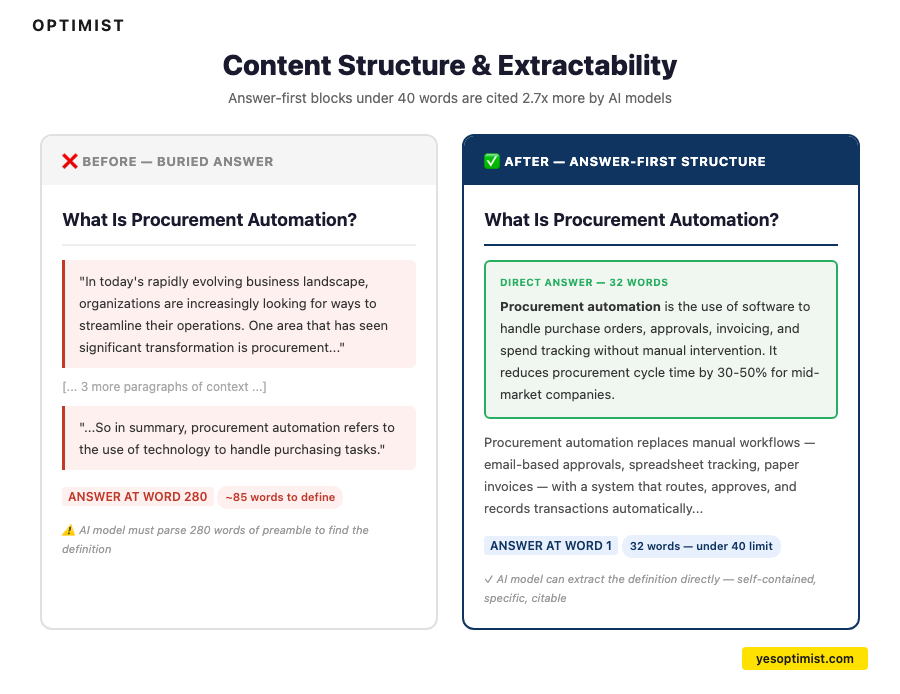

- Content Structure & Extractability — Answer-first formatting, self-contained answer blocks, AEO content types

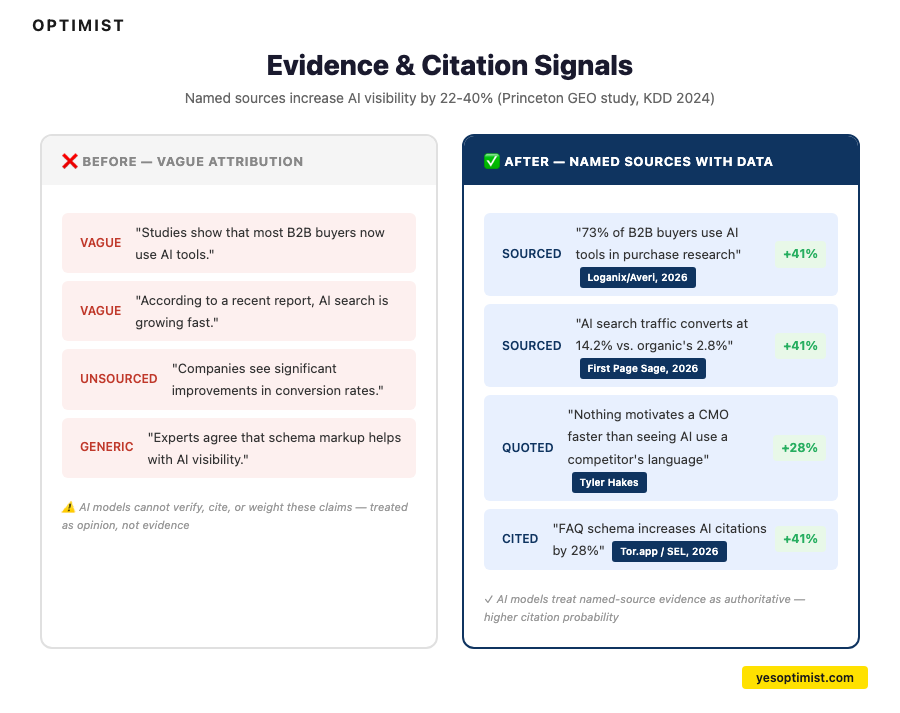

- Evidence & Citation Signals — Named-source statistics, expert quotes, authoritative citations, original data

- Schema & Structured Data — JSON-LD markup, freshness signals, entity-consistent structured data

The first four areas are strategic.

They determine what AI models understand about a brand and how the content ecosystem works as a whole.

The second four are execution-focused.

They determine how individual pages perform in both search and AI responses.

All factors work together.

Why SEO Still Matters for AI Search

Google AI Overviews cite top-10 sources 85.79% of the time, according to Semrush research. For Google’s AI layer specifically, SEO performance is still a strong predictor of citation.

And according to the same Semrush study, 88% of informational queries now trigger AI Overviews, meaning the SEO-to-AI-citation pipeline is active on nearly every query your buyers search for.

This is the premise behind The CORE Framework.

Focusing on only SEO or only AEO leaves major gaps and creates conflicts in your overall organic growth strategy.

Instead, these two approaches should work together.

Well-structured content that earns AI citations also ranks better in search. Named-source evidence that AI models trust also builds E-E-A-T signals. Entity clarity that helps AI models describe a brand accurately also reduces messaging confusion for human visitors.

Here’s how to integrate these factors into a comprehensive AI search optimization plan.

1. Entity & Brand Consistency

Entity & Brand Consistency is the foundation.

If AI models cannot confidently identify, categorize, and describe a brand, no amount of content optimization will produce accurate recommendations.

The first time Optimist ran a brand consistency audit across a client’s owned properties and third-party profiles, fewer than 20% had consistent positioning language across all three. The fix is tedious and unsexy. It is also the single highest-impact AEO action most companies can take.

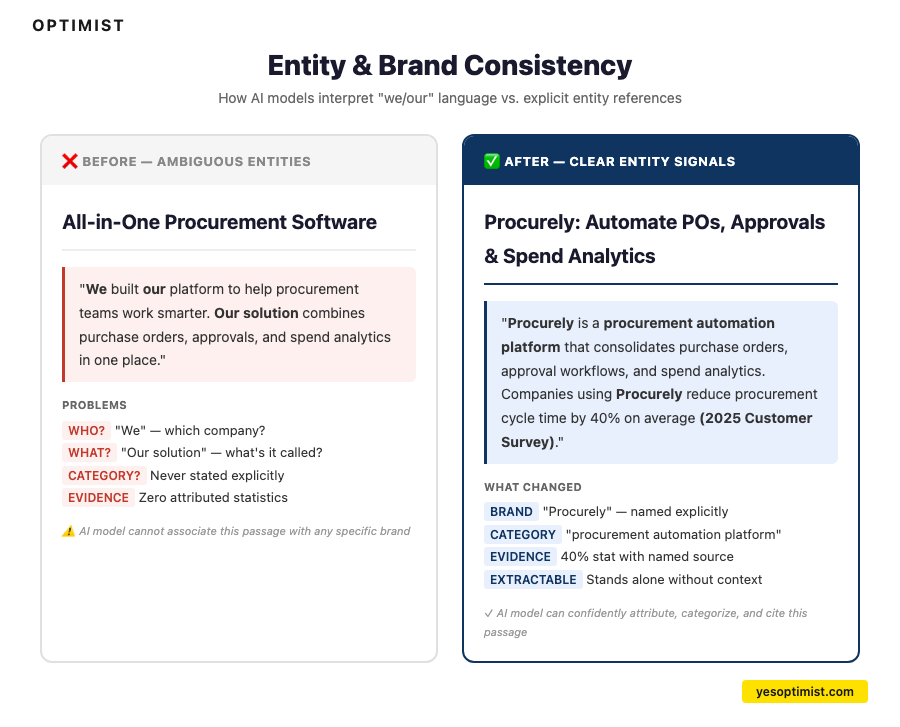

Entity Disambiguation: The Invisible Lever

This is one of those optimizations that sounds almost too simple to matter. AI models build entity graphs from content. When language is ambiguous (pronouns, “we,” “our solution,” interchangeable terms for the same product), it becomes harder for AI to associate claims with the correct brand. In Optimist’s AEO audits, entity ambiguity is one of the most common issues, and one of the cheapest to fix.

Entity disambiguation is straightforward to implement:

- Use third-person brand references: “[Brand] offers X” instead of “We offer X.” Every page should be extractable without ambiguity about who is being described.

- Use the exact same brand name, product name, and category terms every time. Do not alternate between “the platform,” “the tool,” “our solution,” and the actual product name.

- Make category membership explicit: “[Brand] is a [category] that [does what].” Do not make AI models infer what your company does.
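The checklist above can be partly automated. The following is a minimal sketch, assuming page copy is available as plain text; the term list, the function name `find_ambiguous_references`, and the "Acme Billing" sample are hypothetical and only illustrate the idea. It will produce false positives (for example, "US" as a country), so treat the output as a review queue rather than a verdict.

```python
import re

# Hypothetical list of vague self-references; extend it with your own product nicknames.
AMBIGUOUS_TERMS = ["we", "our", "us", "our solution", "the platform", "the tool"]

def find_ambiguous_references(text, terms=AMBIGUOUS_TERMS):
    """Return (term, sentence) pairs for sentences that use a vague self-reference."""
    # Longest terms first so "our solution" is reported instead of just "our".
    ordered = sorted(terms, key=len, reverse=True)
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(t) for t in ordered) + r")\b", re.IGNORECASE
    )
    findings = []
    # Naive sentence split: break after ., !, or ? followed by whitespace.
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        for match in pattern.finditer(sentence):
            findings.append((match.group(1).lower(), sentence))
    return findings

sample = (
    "We offer automated invoicing. "
    "Acme Billing is an invoicing platform that automates collections."
)
for term, sentence in find_ambiguous_references(sample):
    print(f"{term!r} in: {sentence}")
```

Only the first sample sentence is flagged; the second names the brand and its category explicitly, which is the pattern the list recommends.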

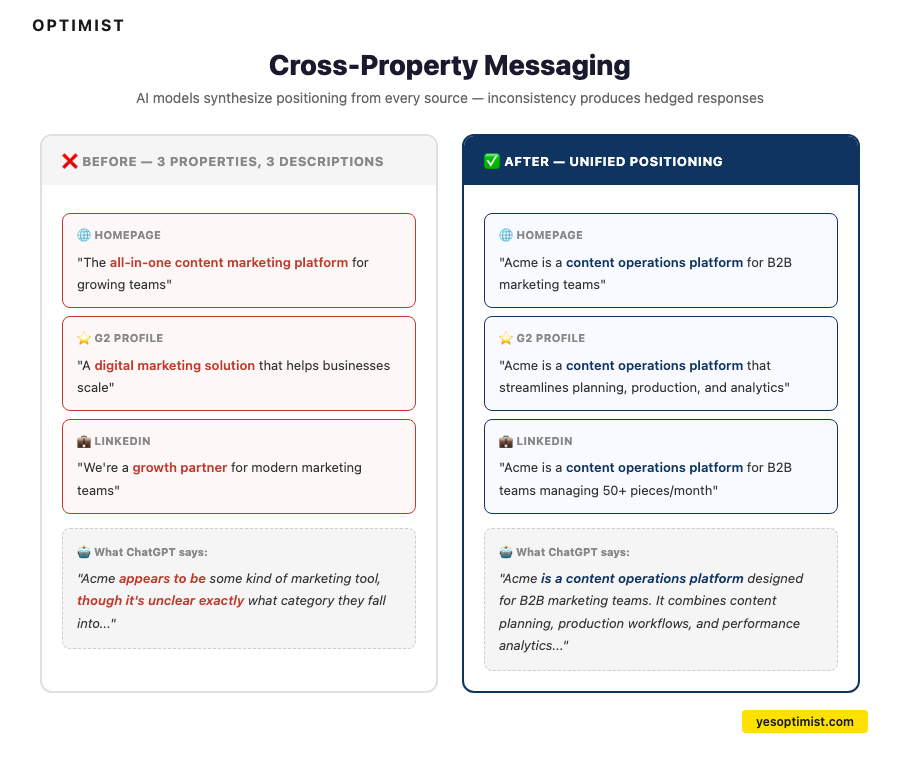

Cross-Property Messaging Alignment

When AI models encounter conflicting positioning signals (one page says “content marketing agency,” another says “SEO consultancy,” a third says “growth partner”), they lose confidence in describing the brand accurately. Inconsistent language produces vague, hedged AI responses that do not drive pipeline.

AI platforms scan for agreement across multiple independent sources. If positioning is consistent across a company’s website, G2 profile, LinkedIn, industry publications, and Wikipedia, AI gains confidence in recommending that company. This is the consensus signal. It is why two companies with similar products can have wildly different AI recommendation rates.

This means the same positioning language should appear on the homepage, the G2 profile, the LinkedIn company page, the product pages, and the pitch deck.

Half the B2B SaaS sites Optimist audits describe themselves differently across these properties. The AI is not confused. It is accurately reflecting the confusion in the brand’s own messaging.

Third-party grounding means being present on the high-authority sites LLMs commonly cite, such as G2, Capterra, industry directories, and Wikipedia (if the brand is notable enough). If a brand is absent or inconsistently described across these sources, AI models hedge.

E-E-A-T signals support AI confidence: author credentials on bylined content, sourced statistics, transparent business details, and first-hand experience shown through case studies and original research.

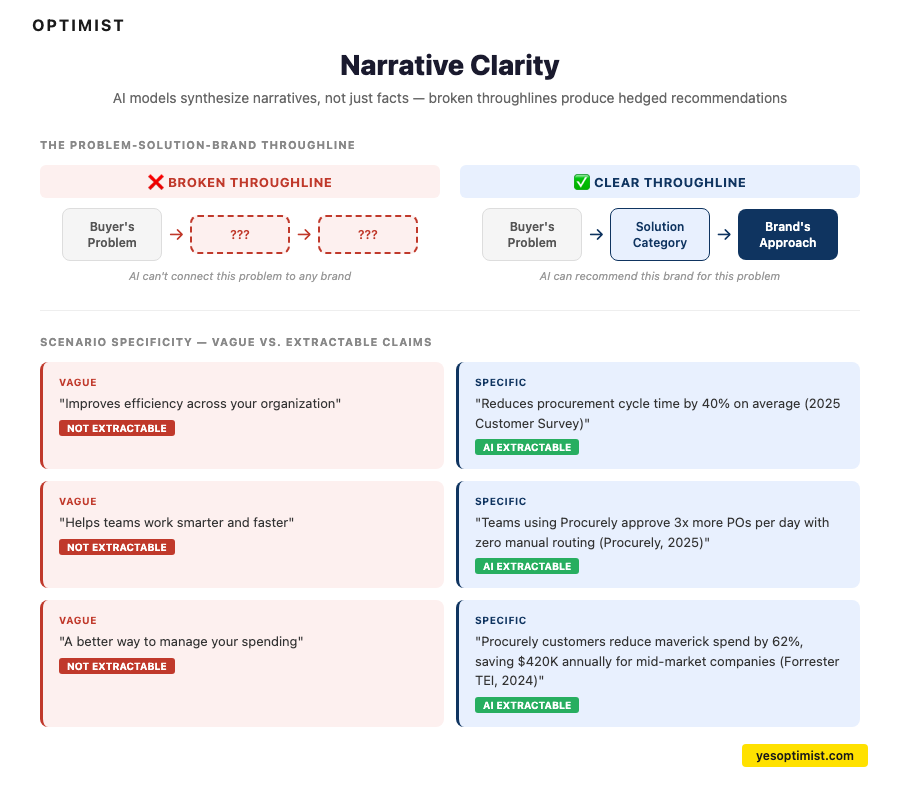

2. Narrative Clarity

AI models do not just extract facts. They synthesize narratives. If a brand’s content does not connect the buyer’s problem to the solution category to the brand’s approach, AI models cannot do it for the brand.

In Optimist’s work with B2B SaaS clients, the pattern is always the same: the homepage says one thing, the blog says another, and the sales deck says a third. AI models cannot synthesize a coherent brand narrative from contradictory inputs. They default to hedging or, worse, pulling the narrative from a competitor whose messaging is cleaner.

Problem-solution throughlines mean each content piece establishes a clear path from the buyer’s problem to the solution category to the brand’s approach. When a buyer describes their pain to an AI model, the brand should surface as part of the answer.

Scenario specificity matters more than most companies realize. “Reduces procurement cycle time by 40%” is extractable and citable. “Improves efficiency” is not. AI models favor specific, quantified claims over abstract value propositions.

Information gain is the Princeton GEO study’s term for content that provides something the top-ranking competitor pages don’t: a unique angle, original data, deeper specificity, or a proprietary framework. The Princeton research found that content with novel insights receives measurably higher AI visibility (Aggarwal et al., KDD 2024).

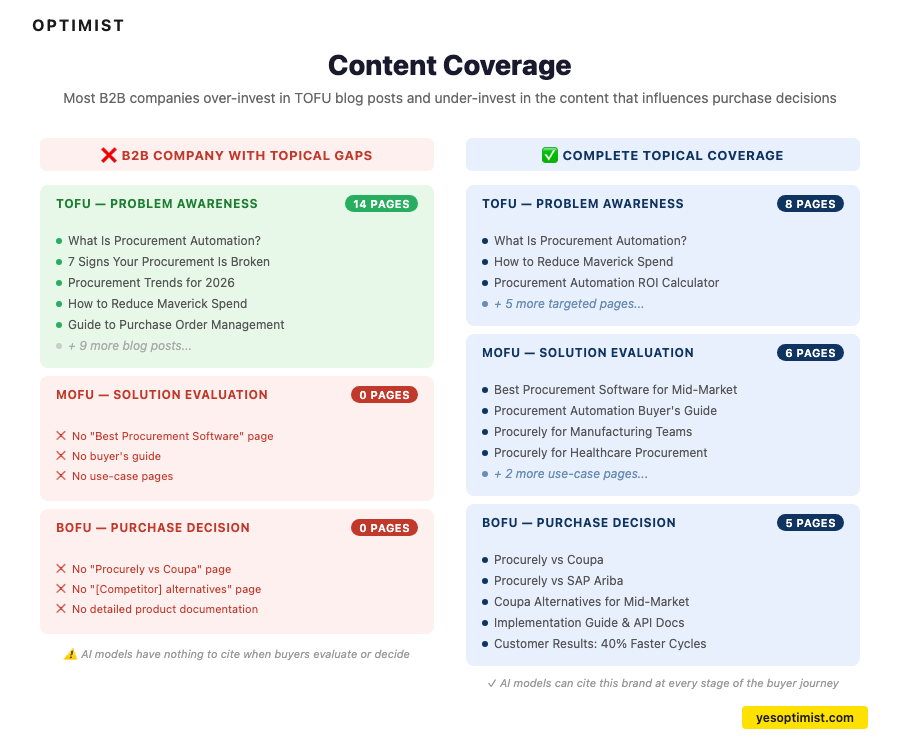

3. Content Coverage

Gaps in content coverage mean gaps in visibility. If a brand does not have a page for a topic its buyers care about, that brand cannot rank for it and AI models have nothing to cite.

Most B2B companies have massive content gaps in the places that matter most: comparison pages, alternative pages, buyer’s guide content, and detailed product documentation.

They have invested in top-of-funnel blog posts for years but have zero content for the queries where buyers are making decisions. The MOFU and BOFU content that directly influences vendor selection is the content most companies have not built.

Commercial and comparison content includes “best X for Y” pages, “[Brand] vs [Competitor]” pages, “[Competitor] alternatives” pages, and buyer’s guides. These are the queries where AI models make recommendations, and they are the queries most companies have ignored.

Fan-out query targeting is the AI-native equivalent of “People Also Ask.” When AI models answer a buyer’s initial question, they generate downstream questions. Content must exist for those follow-up queries too, or the brand drops out of the conversation one question in.

4. Content & Cluster Architecture

How content connects across a site affects both search crawlability and how AI models build topical maps of a brand’s authority.

Hub-and-spoke internal linking means pillar pages anchor each topic cluster, with supporting pages linked back to the hub using descriptive, keyword-relevant anchor text. Not “click here.” Anchor text that tells both search engines and AI crawlers what the destination page covers.

Each content piece should include 3-5 contextual internal links to relevant MOFU/BOFU pages: service pages, case studies, comparison pages, pricing. These commercial links are how search engines and AI models connect a brand’s educational content to its business offerings.

No orphan pages. Every page should be reachable within 3 clicks from the homepage. Pages disconnected from the site’s link structure are invisible to crawlers, both search engine crawlers and AI model crawlers.
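The three-click rule and the orphan check can both be run against a crawl export. This is a minimal sketch, assuming the internal link graph is already available as a dictionary of page to outbound links; the URLs and the function names `click_depths` and `audit_link_structure` are hypothetical. It uses a breadth-first search from the homepage, which gives the minimum number of clicks to each page.

```python
from collections import deque

def click_depths(links, home):
    """Breadth-first search over an internal link graph; returns {page: clicks from home}."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

def audit_link_structure(links, home="/", max_clicks=3):
    """Return (orphans, too_deep): unreachable pages and pages deeper than max_clicks."""
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    depths = click_depths(links, home)
    orphans = sorted(all_pages - set(depths))
    too_deep = sorted(p for p, d in depths.items() if d > max_clicks)
    return orphans, too_deep

# Hypothetical site graph: page -> pages it links to.
site = {
    "/": ["/blog", "/services"],
    "/blog": ["/blog/aeo-guide"],
    "/blog/aeo-guide": ["/services", "/blog/aeo-guide/checklist"],
    "/blog/aeo-guide/checklist": ["/blog/aeo-guide/checklist/template"],
    "/old-landing-page": ["/services"],
}
orphans, too_deep = audit_link_structure(site)
print("Orphans:", orphans)
print("Deeper than 3 clicks:", too_deep)
```

In this sample, `/old-landing-page` links out but nothing links to it, so it is reported as an orphan, and the template page sits four clicks from the homepage.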

Content type mapping by funnel stage means the right content types exist at each stage within each cluster: TOFU problem-stage content, MOFU evaluation content, BOFU decision content. A cluster with ten TOFU articles and zero comparison pages is leaving pipeline on the table.

5. On-Page Foundations

On-page SEO fundamentals have not changed, but the technical accessibility layer has.

Pages should still be optimized for traditional search, including:

- Title tag and meta description. Primary keyword in the title, compelling meta description, clean URL structure.

- Heading hierarchy. H1 containing the primary query, H2s covering distinct subtopics, H3s for supporting detail. No skipped levels.

- Content depth. 1,500-2,500 words minimum for competitive queries. AI models favor pages that cover a topic thoroughly enough to answer follow-up questions.

- Internal linking. Links to and from related content within a topic cluster model. AI crawlers follow internal links to build topical maps.

- Technical accessibility. Fast load time, mobile-responsive design, semantic HTML, canonical tags. The basics, executed consistently.
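The "no skipped levels" rule for headings is easy to verify mechanically. Below is a rough sketch using only Python's standard-library HTML parser; the class and function names are illustrative, and it checks only the heading order, not whether the H1 contains the primary query.

```python
from html.parser import HTMLParser

class HeadingCollector(HTMLParser):
    """Collects heading levels (1-6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "h1".."h6" is enough to match.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))

def heading_issues(html):
    """Return a message for every place a heading jumps down more than one level."""
    collector = HeadingCollector()
    collector.feed(html)
    issues = []
    for prev, cur in zip(collector.levels, collector.levels[1:]):
        if cur > prev + 1:
            issues.append(f"h{prev} is followed by h{cur} (skipped level)")
    return issues

page = "<h1>AI search optimization</h1><h2>Why it matters</h2><h4>Details</h4><h2>Next steps</h2>"
print(heading_issues(page))
```

Moving back up the hierarchy (an H4 followed by an H2) is treated as normal; only downward jumps that skip a level are flagged.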

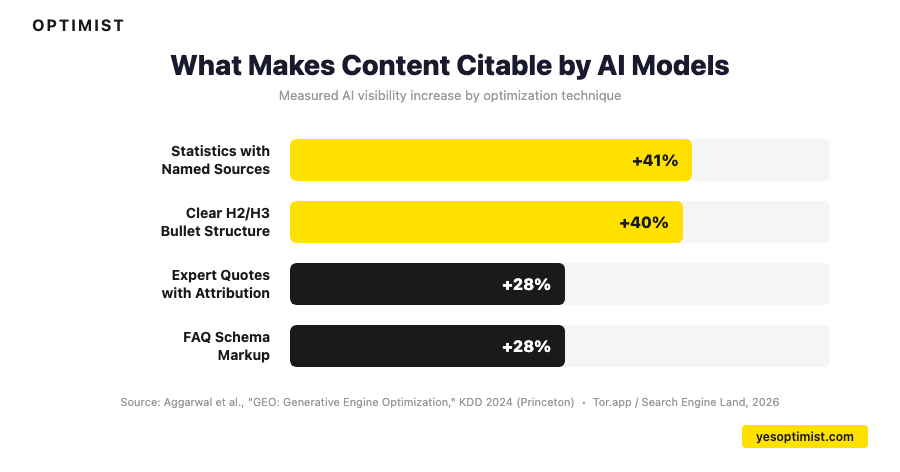

Pages with clear H2/H3/bullet structure are 40% more likely to be cited by AI models (Tor.app / Search Engine Land, 2026).

AI Bot Accessibility

If AI models cannot crawl a page, they cannot cite it. Verify these technical requirements:

- GPTBot, ClaudeBot, PerplexityBot, and Google-Extended are not blocked in `robots.txt`

- Content is server-rendered or pre-rendered. Pages that depend on client-side JavaScript to render their content may be invisible to AI crawlers.

- No login walls or aggressive interstitials blocking content access

- Semantic HTML. Proper use of `<article>`, `<section>`, and `<aside>` tags helps AI models parse content structure.
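The `robots.txt` requirement can be checked with Python's standard-library `urllib.robotparser`. This is a sketch under a few assumptions: the `robots.txt` content is passed in as a string (fetching it is left out), `https://www.example.com/` is a placeholder URL, and the standard-library parser's rule matching may differ in edge cases from how each individual crawler interprets the file.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the checklist above.
AI_USER_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]

def blocked_ai_agents(robots_txt, url="https://www.example.com/"):
    """Return the AI user agents that this robots.txt disallows for the given URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_USER_AGENTS if not parser.can_fetch(agent, url)]

# Hypothetical robots.txt that blocks one AI crawler and allows everything else.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(blocked_ai_agents(sample_robots))
```

Running the same function against a few representative URLs (homepage, a blog post, a comparison page) helps catch path-specific `Disallow` rules.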

6. Content Structure & Extractability

Content structure directly determines whether AI models can extract and cite a brand’s content. This is where AEO-specific execution becomes most visible.

Answer-First Structure

For every primary query a page targets, provide a clear, concise answer in under 40 words immediately after the relevant heading. In Optimist’s analysis, short answer blocks under 40 words consistently outperform longer passages for AI citation on direct questions. The answer goes first. The expansion follows.

Self-contained answer blocks are the core unit of AEO content. Each key claim should be formatted as a standalone passage that makes complete sense if extracted out of context. AI models pull individual paragraphs from pages. If a claim requires three surrounding paragraphs to be understood, it will not get cited. No “as mentioned above.” No pronoun references to other sections. Each block stands alone.
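Both rules (under 40 words, no dependence on other sections) can be linted before publishing. The sketch below is illustrative only: the back-reference phrases are a small hypothetical list, and a passing result does not mean the passage is a good answer, only that it meets the two mechanical criteria.

```python
MAX_ANSWER_WORDS = 40  # threshold taken from the guideline above
# Illustrative phrases that signal a passage leans on surrounding text.
BACK_REFERENCES = ["as mentioned above", "as noted earlier", "see above"]

def check_answer_block(answer):
    """Return a list of problems with a candidate answer block; empty means it passes."""
    problems = []
    word_count = len(answer.split())
    if word_count >= MAX_ANSWER_WORDS:
        problems.append(f"{word_count} words (target: under {MAX_ANSWER_WORDS})")
    lowered = answer.lower()
    for phrase in BACK_REFERENCES:
        if phrase in lowered:
            problems.append(f"depends on other sections: {phrase!r}")
    return problems

good = (
    "AI search optimization is the practice of making a brand visible, accurately "
    "represented, and recommended across AI search platforms like ChatGPT, "
    "Perplexity, Gemini, Google AI Overviews, and Claude."
)
bad = "As mentioned above, it helps."
print(check_answer_block(good))
print(check_answer_block(bad))
```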

AEO Content Blocks: Match the Format to the Query Intent

Different query types require different content structures. AI models match content format to question format:

- Definition blocks for “What is X?” queries. One sentence definition, one to two sentences of expansion, why it matters. Keep it under 80 words.

- Step-by-step blocks for “How to X?” queries. Overview sentence, three to five numbered steps, expected outcome. Each step should be actionable in a single sitting.

- Comparison tables for “X vs Y” queries. Side-by-side table with four or more comparison criteria, a “best for” row, and a bottom-line recommendation.

- FAQ blocks for long-tail questions. Natural question phrasing as the heading, direct answer in the first sentence, supporting context in 50-100 words total.

7. Evidence & Citation Signals

The Princeton GEO study (Aggarwal et al., KDD 2024) quantified what makes content citable by AI models:

- Statistics with named sources increase AI visibility by 41%. The pattern: “According to [Source], [specific stat with number and timeframe].” Not “studies show” or “according to a recent report.” Named sources change how AI models weight a passage.

- Expert quotes with attribution increase AI visibility by 28%. Name, role, and organization must all be present.

- Citing authoritative external sources increases AI visibility by up to 115% for lower-ranked content. This is the strongest single signal for pages outside the top 3.

- Original data tables earn 4.1x more AI citations than pages without original data (Tor.app / Search Engine Land, 2026). Proprietary research, survey results, or first-party analysis that AI models cannot find elsewhere receive preferential treatment.

The difference between “According to Forrester” and “according to a recent study” sounds trivial. But named sources change how AI models weigh the passage.
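To get a rough count of named-source statistics on a page, a simple pattern match is often enough for a first pass. The sketch below is a heuristic under stated assumptions: it only recognizes the "According to [Source], [number]" phrasing, it treats a capitalized word after "According to" as a named source, and it will miss parenthetical citations. The regex and function name are illustrative.

```python
import re

# "According to <Capitalized source>, ... <digit>" within a single sentence.
# Lowercase openers such as "a recent report" do not match because of [A-Z].
NAMED_STAT = re.compile(r"According to [A-Z][^,.]*,[^.]*?\d")

def named_stat_density(text):
    """Return (count, count per 1,000 words) of named-source statistics in text."""
    words = len(text.split())
    stats = len(NAMED_STAT.findall(text))
    density = stats / words * 1000 if words else 0.0
    return stats, density

sample_text = (
    "According to G2, 50% of B2B software buyers now start their vendor research "
    "in an AI chatbot. Studies show that most buyers use AI. "
    "According to a recent report, 40% of teams agree."
)
count, per_thousand = named_stat_density(sample_text)
print(count, round(per_thousand, 1))
```

Only the G2 sentence counts in this sample; "Studies show" and "a recent report" are exactly the unnamed attributions the list above warns against.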

The evidence sandwich pattern structures major claims effectively. State the claim, provide 3+ sourced data points, then add a practitioner observation about what that evidence means in practice. A stat without interpretation is decoration. A stat with context is authority.

8. Schema & Structured Data

Schema markup provides machine-readable signals that help both search engines and AI models understand page content. It is not a silver bullet, but it is a meaningful multiplier.

Schema That Impacts AI Citation

- HowTo schema. In Optimist’s client work, pages with HowTo schema on tutorial and process content have consistently outperformed equivalent pages without it, with observed citation lifts around 1.5-2x. If a page teaches a method or process, this is the highest-impact schema to implement.

- FAQPage schema delivers measurable lifts for question-answer content. Every FAQ section should have corresponding schema.

- Article/BlogPosting schema with `datePublished`, `dateModified`, and `author` (with Person schema) helps AI models assess content freshness and credibility.

- Organization schema with `name`, `url`, `logo`, and `sameAs` for social profiles reinforces entity clarity.

- Speakable schema shows zero measurable impact on AI brand mention rates. Save the implementation effort.

More schema is not better schema.

The question is whether the markup helps an AI model understand what the page is about and whether the content behind it is worth extracting. If the content is thin, no amount of JSON-LD is going to save it.
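As a concrete reference point, the Article and Organization properties listed above can be assembled into JSON-LD with a few lines of Python. This is a sketch, not a complete schema implementation: every value below (names, dates, URLs) is a placeholder, and nesting the organization under `publisher` is one reasonable arrangement rather than the only valid one.

```python
import json

def article_jsonld(headline, author_name, date_published, date_modified,
                   org_name, org_url, logo_url, same_as):
    """Build an Article JSON-LD dict with a Person author and an Organization publisher."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,
        "dateModified": date_modified,  # should reflect the actual last update
        "author": {"@type": "Person", "name": author_name},
        "publisher": {
            "@type": "Organization",
            "name": org_name,
            "url": org_url,
            "logo": logo_url,
            "sameAs": same_as,  # social and third-party profile URLs
        },
    }

# Placeholder values for illustration only.
markup = article_jsonld(
    headline="AI Search Optimization Guide",
    author_name="Jane Doe",
    date_published="2025-01-15",
    date_modified="2025-06-01",
    org_name="Example Co",
    org_url="https://www.example.com",
    logo_url="https://www.example.com/logo.png",
    same_as=["https://www.linkedin.com/company/example-co"],
)
print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```

Keeping the organization `name` identical to the brand name used in page copy ties the markup back to the entity consistency work described earlier.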

Content Freshness Signals

AI models exhibit strong recency bias. According to Passionfruit research, AI-cited content is 25.7% fresher on average than traditionally ranked content, and 76.4% of ChatGPT’s top-cited pages were updated within the last 30 days.

Freshness signals that matter:

- Visible “Last Updated” date on the page

- `dateModified` in schema markup reflecting the actual last update

- Current-year statistics and examples throughout, not “in recent years” or undated claims

- Quarterly review cadence: flag content for refresh if data is more than 90 days stale
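The 90-day staleness rule is straightforward to apply to a content inventory. A minimal sketch follows, assuming each page's `dateModified` is available as an ISO date string; the URLs, dates, and function name are hypothetical.

```python
from datetime import date

STALE_AFTER_DAYS = 90  # threshold from the review cadence above

def pages_needing_refresh(pages, today):
    """pages: {url: ISO dateModified}. Returns URLs past the threshold, oldest first."""
    stale = []
    for url, modified in pages.items():
        age = (today - date.fromisoformat(modified)).days
        if age > STALE_AFTER_DAYS:
            stale.append((age, url))
    return [url for age, url in sorted(stale, reverse=True)]

# Hypothetical inventory of page -> last modified date.
inventory = {
    "/blog/aeo-guide": "2025-01-10",
    "/blog/schema-basics": "2025-05-20",
    "/compare/acme-vs-globex": "2024-11-02",
}
print(pages_needing_refresh(inventory, today=date(2025, 6, 1)))
```

Passing `today` explicitly keeps the check reproducible; in a scheduled job it would typically be `date.today()`.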

Sharp Healthcare saw an 843% click increase after implementing site-wide schema across key page types over 9 months (Schema App case study via Tor.app, 2026).

Getting Started with AI Search Optimization

Sequence matters. Across the hundreds of AEO audits Optimist has run, the pattern is always the same: companies that start with entity consistency and MOFU/BOFU queries see results 3-4x faster than companies that start by restructuring their entire blog.

> “The most effective first move is almost never what people expect. It is not schema. It is not content structure. It is fixing the fact that your homepage, your G2 profile, and your LinkedIn page all describe your company differently. Fix that, and the AI models start getting you right across the board.” — Tyler Hakes, Strategy Director at Optimist

Step 1: Audit Current AI Visibility

Run 20-30 category queries across ChatGPT, Claude, Perplexity, Gemini, and Google AI Overviews. For each query, document: Is the brand mentioned? Is it accurately described? Is it recommended? Is the brand’s content cited? This baseline audit reveals where a brand stands today and identifies the highest-priority gaps.

Step 2: Assess Entity Consistency

Check whether the brand is described the same way across homepage, G2, LinkedIn, Capterra, and product pages. Look for discrepancies in category language, product descriptions, and positioning statements. Fix these first. Entity consistency affects how AI models describe the brand across every query, not just one.

Step 3: Prioritize by Pipeline Impact

Do not optimize everything at once. Start with the topics and queries where AI mentions have the most impact on purchase decisions. MOFU and BOFU queries drive pipeline directly. TOFU queries build awareness but take longer to influence revenue.

Step 4: Build the Integrated Roadmap

Use the CORE Framework to map SEO and AEO optimizations to each buyer journey stage. For each priority topic cluster, identify what content exists, what is missing, what needs restructuring for AI extractability, and what entity signals need reinforcement.

Step 5: Measure and Iterate

Monthly benchmark runs track progress on share of model response and brand accuracy. Quarterly re-assessments identify trends and emerging opportunities. The companies that treat AI search optimization as an ongoing discipline, not a one-time project, are the ones that compound results over time.

Quick Wins

These are the optimizations that produce the fastest measurable results on existing pages:

- Add a direct answer block (under 40 words) near the top of each priority page

- Add 3-5 attributed statistics per 1,000 words

- Replace “we” language with third-person entity language

- Add HowTo or FAQPage schema where appropriate

- Update the “Last Updated” date and `dateModified` in schema

- Verify AI crawlers are not blocked in `robots.txt`

Measuring AI Search Optimization Results

If there is one thing to take from this entire guide, it is this: AI search optimization should be measured by pipeline impact, not citation counts. Citation is a leading indicator. Pipeline and revenue are the metrics that matter. Getting mentioned by ChatGPT feels great. Getting pipeline from it is what pays for the work.

Optimist has seen teams celebrate a jump in “Share of Model Response” while their AI-referred pipeline stayed flat. Citation rate without conversion tracking is a vanity metric — it is the AEO equivalent of celebrating page views in 2015. The metric that matters is LLM-sourced pipeline: how many qualified opportunities originated from a buyer who found you through an AI conversation. Everything else is a leading indicator at best and a distraction at worst.

Key AI Search Metrics

- Citation rate by model. How often a brand appears in AI answers across ChatGPT, Claude, Perplexity, Gemini, and AI Overviews for target queries.

- Share of Model Response. The percentage of AI-generated answers in a category that mention or recommend a specific brand.

- Sentiment accuracy. Whether AI models describe the brand correctly: right category, right positioning, right differentiators.

- AI-referred traffic and conversions. Traffic from AI platforms (trackable in GA4 with proper attribution) and the conversion rate of that traffic.

- LLM-sourced pipeline and revenue. The business outcome metric. How much pipeline and closed revenue came from buyers who discovered the company through AI search.
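The first two metrics can be computed from a simple log of benchmark runs. The sketch below assumes audit results have been recorded by hand or by a monitoring tool as one row per model and query, with the brands each answer mentioned; the row format, brand names, and function name are all hypothetical, and it counts mentions only, without distinguishing a mention from a recommendation.

```python
from collections import defaultdict

def mention_rates(results, brand):
    """Return ({model: mention rate}, overall share of responses mentioning brand)."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for row in results:
        totals[row["model"]] += 1
        if brand in row["brands_mentioned"]:
            hits[row["model"]] += 1
    by_model = {model: hits[model] / totals[model] for model in totals}
    overall = sum(hits.values()) / len(results) if results else 0.0
    return by_model, overall

# Hypothetical benchmark log: one row per (model, query) answer.
audit = [
    {"model": "ChatGPT", "query": "best invoicing software", "brands_mentioned": ["Acme", "Globex"]},
    {"model": "ChatGPT", "query": "invoicing tools for agencies", "brands_mentioned": ["Globex"]},
    {"model": "Perplexity", "query": "best invoicing software", "brands_mentioned": ["Acme"]},
    {"model": "Perplexity", "query": "invoicing tools for agencies", "brands_mentioned": ["Acme", "Initech"]},
]
by_model, overall = mention_rates(audit, "Acme")
print(by_model)
print(f"Share of model response: {overall:.0%}")
```

Re-running the same query set each month makes the numbers comparable over time; pairing them with CRM data is still needed to connect visibility to pipeline.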

Why AI Search Converts Better

AI-referred visitors convert at 4.4x the rate of traditional organic traffic (Semrush, June 2025). They arrive mid-research, with context and intent already established by the AI conversation. The buyer has already described their problem, received a recommendation, and clicked through to learn more. That is a completely different visitor than someone clicking a blue link on page one.

Pipeline attribution is harder with AI search. Buyers who discover a company through ChatGPT or Perplexity may show up as “direct” traffic in Google Analytics, not “organic.” An integrated measurement approach that combines AI citation monitoring with traffic analytics and CRM pipeline data is essential for accurate ROI calculation.

Let’s Build an AI Search Optimization Gameplan, Together

Optimist helps clients apply the CORE Framework to their business to drive increased SEO and AEO visibility, organic traffic growth, and inbound pipeline.

The CORE Framework in Action

Optimist has applied the CORE Framework across dozens of B2B technology engagements.

Three AEO case studies and dozens of SEO case studies illustrate what the approach produces when executed systematically.

Here are a few of our latest:

- B2B tech client: 49x LLM referral revenue in 14 months

- Fintech client: 8x LLM conversions in 8 months

- Retail client: 13x LLM-sourced revenue year over year

Schedule a Strategy Call

We’re here to help B2B SaaS and technology companies drive more organic pipeline and revenue.

If you’re looking for support on AEO strategy, planning, or execution, go ahead and reach out.

Schedule your strategy call with Optimist today to learn more about how we can help you take control of your AI visibility and map your path to measurable LLM-referred revenue.

Frequently Asked Questions

How do AI search engines choose what to cite?

AI search engines select citations based on content structure, authority signals, freshness, and entity clarity, not traditional ranking position. Only 12% of ChatGPT-cited URLs rank in Google’s top 10 (Gracker.ai / Profound research). In our experience, the single biggest factor is whether a passage can stand alone when extracted. Content that provides clear, self-contained answers with attributed statistics, expert quotes, and consistent brand language earns citations at significantly higher rates. The Princeton GEO research found that adding source citations, expert quotations, and statistics ranked among the most effective tactics it tested, improving AI visibility by up to 40% (Aggarwal et al., KDD 2024).

Does SEO still matter for AI search optimization?

Yes, and it is not even close. Google AI Overviews cite top-10 sources 85.79% of the time (Semrush). Well-structured content that ranks in search is increasingly the same content that AI models cite. We have not seen a single client succeed at AEO without solid SEO foundations. The most effective approach, what Optimist calls the CORE Framework, optimizes for both simultaneously because the same signals (depth, authority, structure, evidence) drive performance in both channels.

How long does it take to see results from AI search optimization?

For existing content, optimizing against the CORE Framework can produce measurable citation improvements in 4-6 weeks. Adding answer-first blocks, stat density, entity clarity, and schema to high-performing pages is the fastest path to results. For new content, AI models typically need 2-4 weeks to discover and start citing new pages. Sustained citation authority builds over 3-6 months of consistent optimization.

What is the difference between AEO, GEO, and AI search optimization?

AEO (Answer Engine Optimization), GEO (Generative Engine Optimization), and AI search optimization all describe the same discipline: optimizing content so AI-powered search platforms (ChatGPT, Perplexity, Gemini, Google AI Overviews) cite and recommend a brand. AEO is the most widely used term in B2B contexts. The terminology is converging, and the tactics are identical regardless of which term is used. Do not let the naming debate distract from execution.

How do you measure AI search optimization ROI?

Measure AI search optimization ROI through a layered approach: brand mention rate and share of model response (is the brand appearing in AI answers?), AI-referred traffic and conversion rate in GA4 (is that visibility driving website visits?), and LLM-sourced pipeline and revenue (are those visits converting to qualified leads and closed deals?). The last metric is the one that matters. AI-referred visitors convert at 4.4x the rate of traditional organic traffic (Semrush, June 2025), making the per-visit value substantially higher even if total volume is lower.

What schema markup helps with AI search optimization?

In Optimist’s client work, HowTo schema has produced consistent citation lifts of 1.5-2x for tutorial and process content. FAQPage schema delivers measurable lifts for question-answer content. Article/BlogPosting schema with `dateModified` and `author` information helps AI models assess content freshness and credibility. Speakable schema shows zero measurable impact and is not worth the implementation effort. All schema should be implemented in JSON-LD format and validated with Google’s Rich Results Test.